Global AI Chip & Accelerator Market Forecast 2034 | CAGR 17.2%

Global AI Chip and Accelerator Market Size, Share, Growth & Industry Analysis By Processor Type (GPUs, ASICs & Custom XPUs, Edge AI SoCs & NPUs, FPGAs & Other Accelerators), By Function (Training, Inference), By Deployment Environment (Data Center & Cloud, Edge & On-Device, Enterprise On-Premise), By End Use (Cloud Providers, Enterprise IT, Consumer Electronics, Automotive & Robotics, Public Sector & Telecom), Industry Trends & Forecast 2026–2034

Report Overview

| Market Size (2025) | Forecast Value (2034) | CAGR (2026–2034) | Largest Region (2025) |
|---|---|---|---|
| USD 182.40 Billion | USD 760.80 Billion | 17.2% | North America, 41.0% |

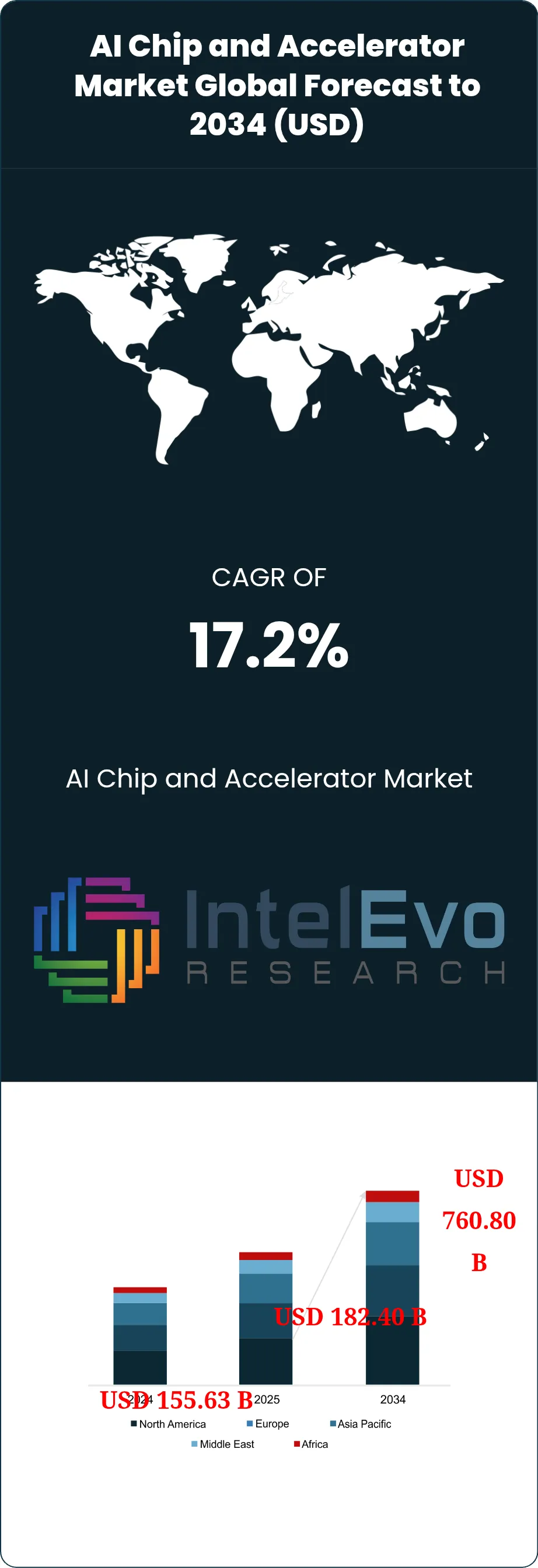

The AI Chip and Accelerator Market was valued at approximately USD 155.63 Billion in 2024 and reached USD 182.40 Billion in 2025. It is projected to grow to USD 760.80 Billion by 2034, expanding at a CAGR of 17.2% over the 2026–2034 forecast period. That implies an incremental revenue opportunity of USD 578.40 Billion between 2025 and 2034. The market expanded faster than the broader chip industry in 2025 because large model training, inference serving, AI networking, and edge deployment all scaled at the same time. Our current assessment places AI accelerators at the center of data center capital spending: training clusters still drive the largest single pool of revenue, while inference demand is widening into enterprise software, AI search, agentic workloads, robotics, and on-device systems. Official industry data shows total semiconductor sales of roughly USD 792 billion to USD 796 billion in 2025, and both the Semiconductor Industry Association (SIA) and World Semiconductor Trade Statistics (WSTS) tied that expansion to data center and AI-related demand.
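The headline figures can be checked with the standard compound-growth formula, CAGR = (end / start)^(1 / years) - 1. The Python sketch below is illustrative only. It assumes the 17.2% rate compounds annually over the nine years from the 2025 base to 2034, and the function and constant names are hypothetical. Because the published rate is rounded to one decimal, compounding 17.2% forward lands within about USD 1 Billion of the USD 760.80 Billion forecast rather than on it exactly.

```python
# Sketch: reproduce the headline growth arithmetic quoted in this report.
# Assumption: 17.2% compounds annually for the nine years 2026-2034,
# starting from the 2025 base value. Names are hypothetical.

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start and an end value."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> list[float]:
    """Year-by-year values when `start` compounds at `rate` for `years` years."""
    return [start * (1 + rate) ** t for t in range(years + 1)]


BASE_2024 = 155.63    # USD billion
BASE_2025 = 182.40    # USD billion
TARGET_2034 = 760.80  # USD billion

implied = cagr(BASE_2025, TARGET_2034, 2034 - 2025)  # nine compounding years
path = project(BASE_2025, 0.172, 2034 - 2025)        # path[0] = 2025, path[-1] = 2034

print(f"Implied CAGR 2026-2034: {implied:.1%}")
print(f"2024 -> 2025 growth:    {BASE_2025 / BASE_2024 - 1:.1%}")
print(f"2034 value at 17.2%:    {path[-1]:.1f}")
print(f"Incremental revenue:    {TARGET_2034 - BASE_2025:.2f}")
```

Running it should show that both the 2024 to 2025 step and the 2025 to 2034 forecast are consistent with a rate of roughly 17.2% per year.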


The AI Chip and Accelerator Market also shows an unusual concentration of revenue. NVIDIA’s fiscal 2025 data center revenue reached USD 115.2 billion, Broadcom’s AI semiconductor revenue hit USD 8.4 billion in the first quarter of fiscal 2026, and AMD’s 2025 data center revenue rose to USD 16.6 billion on a continued Instinct GPU ramp. This concentration keeps the market highly scale-sensitive, because buyers still pay a premium for mature software stacks, high-bandwidth memory access, interconnect performance, rack density, and power efficiency. Demand is strongest in hyperscale cloud, frontier model development, sovereign AI infrastructure, and enterprise inference clusters. At the same time, edge demand is becoming more visible through NPUs and dedicated low-power accelerators in PCs, industrial systems, cameras, retail devices, and robotics. Qualcomm expanded its AI PC and inference push through Snapdragon X and Cloud AI programs, while Hailo moved deeper into edge retail and embedded deployments with Hailo-10H.

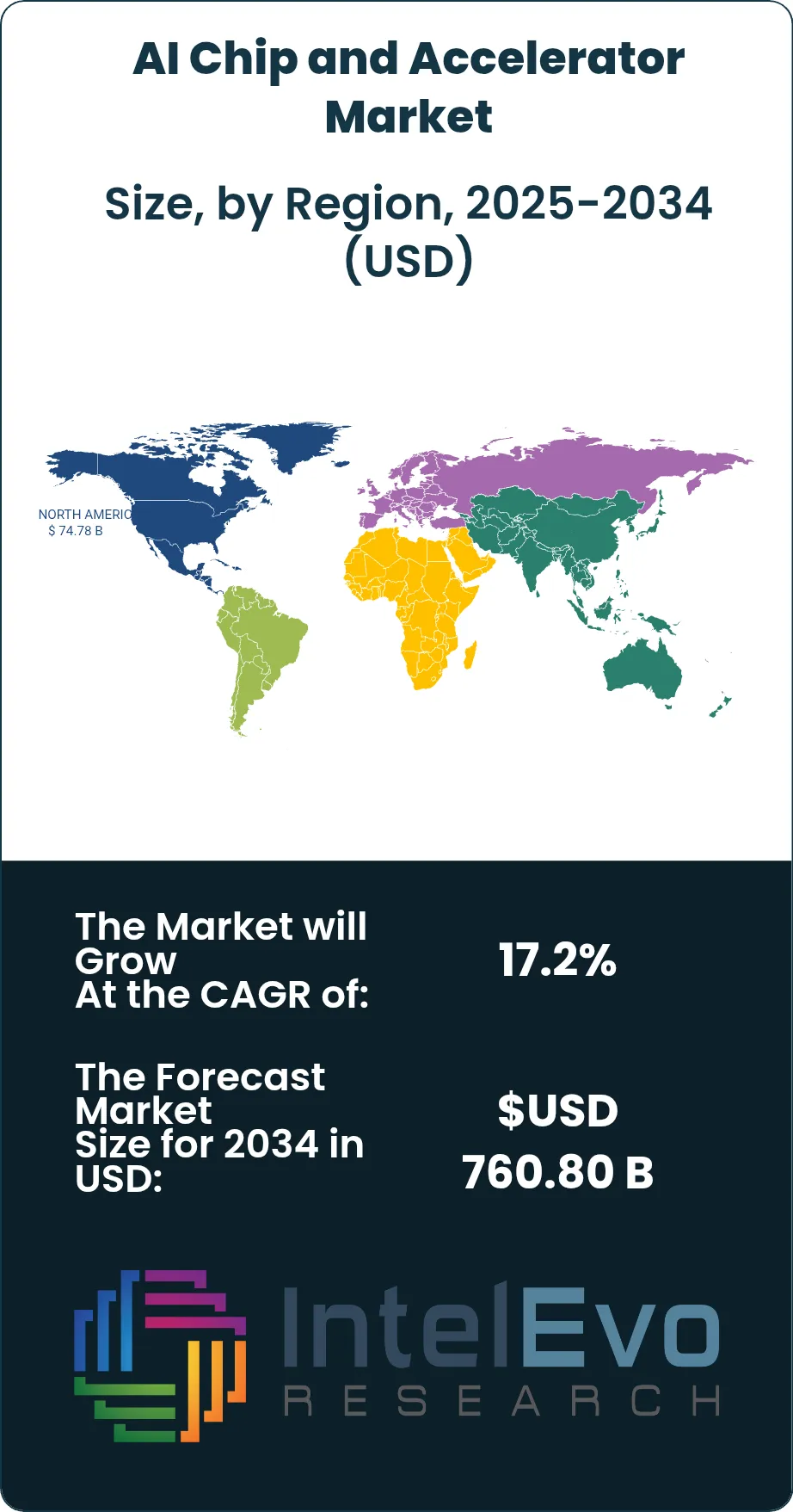

Supply-side conditions remain tight. High-bandwidth memory is the clearest bottleneck in the AI Chip and Accelerator Market: Micron said its HBM output for calendar 2025 was sold out, with most 2026 supply also under contract. Samsung said its HBM sales should more than triple in 2026 from 2025 levels, which signals that memory, packaging, and advanced manufacturing remain critical constraints on accelerator volume growth. Regulation is also shaping the market. U.S. export controls on advanced computing semiconductors remain a material variable for addressable demand and for where supply is routed, while the European Chips Act continues to push regional manufacturing resilience and chiplet capacity. North America led revenue at USD 74.78 Billion in 2025, but Asia Pacific is the main supply-and-demand swing region because it combines foundry capacity, memory production, server assembly, and rising domestic AI infrastructure investment.

Key Takeaways

- Market Growth: The AI Chip and Accelerator Market stood at USD 182.40 Billion in 2025 and is projected to reach USD 760.80 Billion by 2034, reflecting a CAGR of 17.2% across 2026–2034. Growth is being driven by data center training clusters, fast-rising inference demand, and broader edge AI deployment.

- Segment Dominance: GPUs held the largest processor share in 2025 at 57.0%, equal to USD 103.97 Billion. GPU leadership remained intact because training-scale workloads, CUDA-linked software maturity, and rack-scale availability still favored general-purpose accelerated computing.

- Segment Dominance: Data center and cloud deployments held the largest deployment-environment share in 2025 at 62.0%, equal to USD 113.09 Billion. Hyperscale model training, inference serving, and sovereign AI clusters remained the main spending centers.

- Driver: The main driver is hyperscale and enterprise AI infrastructure spending. NVIDIA’s full-year revenue reached USD 215.9 billion in fiscal 2026 and its quarterly data center revenue hit USD 62.3 billion, showing how quickly accelerator demand is scaling.

- Restraint: The main restraint is supply concentration in HBM, packaging, and export-sensitive advanced compute. Micron said its 2025 HBM output was sold out and that most 2026 supply was already under agreement, which keeps volume growth tied to memory availability.

- Opportunity: The largest opportunity sits in inference and custom AI silicon. Broadcom guided to first-quarter fiscal 2026 AI semiconductor revenue of USD 8.2 billion and later reported USD 8.4 billion, driven by custom AI accelerators and networking, which indicates how large the custom XPU revenue pool has become.

- Trend: The dominant trend is the shift from stand-alone accelerators to rack-scale AI systems with tightly coupled memory and networking. NVIDIA Blackwell Ultra, AMD MI350, Broadcom Tomahawk 6, and Marvell’s custom packaging platform all reflect this shift in 2025.

- Regional Analysis: North America led the AI Chip and Accelerator Market with 41.0% of global revenue in 2025, equal to USD 74.78 Billion. The region benefits from hyperscaler concentration, chip design leadership, and faster commercial rollout of AI clusters.

Competitive Landscape Overview

The AI Chip and Accelerator Market is highly consolidated, with the top four companies accounting for an estimated 77.5% of global revenue in 2025. Competition is technology-driven and platform-based. Buyers compare compute density, memory bandwidth, software maturity, networking, and supply access more than list price. Competitive intensity increased sharply across 2025 and early 2026 as NVIDIA ramped Blackwell, AMD launched MI350, Broadcom deepened custom XPU and AI networking exposure, and Marvell pushed advanced packaging and scale-up connectivity for custom accelerators.
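The estimated four-firm share can be restated as a revenue pool. The Python sketch below is a minimal illustration that applies the 77.5% figure to the USD 182.40 Billion 2025 total. The report does not give the split among the four leaders, so the sketch only separates the top four from all other vendors; the constant names are hypothetical.

```python
# Sketch: translate the estimated four-firm concentration ratio (CR4) into
# USD terms. Inputs come from this report; the split among the four leaders
# is not stated, so only "top four" vs. "everyone else" is computed.

TOTAL_2025 = 182.40  # USD billion, 2025 market size
CR4 = 0.775          # estimated combined share of the four largest vendors

top_four = TOTAL_2025 * CR4
rest = TOTAL_2025 - top_four

print(f"Top-four revenue pool: USD {top_four:.2f} B")
print(f"All other vendors:     USD {rest:.2f} B")
```

On these inputs the four leaders account for roughly USD 141.36 Billion, leaving about USD 41.04 Billion for every other vendor combined.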

Competitive Landscape Matrix

| Company Name | Headquarters (Country) | Market Position | Key Product/Solution in this market | Geographic Strength | Recent Strategic Move |
|---|---|---|---|---|---|
| NVIDIA | United States | Leader | Blackwell GPU / GB300 NVL72 | North America, Europe | Announced Blackwell Ultra AI factory platform in March 2025 |
| Broadcom | United States | Leader | Custom AI XPU and Tomahawk 6 | North America, Asia Pacific | Shipped Tomahawk 6 in June 2025 and reported USD 8.4 billion AI revenue in March 2026 |
| AMD | United States | Challenger | Instinct MI350 Series | North America, Asia Pacific | Launched MI350 Series in June 2025 |
| Marvell | United States | Challenger | Custom XPU platform with HBM and CPO | North America | Agreed to acquire Celestial AI in December 2025 |
| Intel | United States | Challenger | Gaudi 3 AI Accelerator | North America, Europe | Expanded Gaudi 3 availability in May 2025 |
| Qualcomm | United States | Niche Player | Cloud AI 100 and AI250 | North America, Middle East | Unveiled AI200 and AI250 rack-scale inference products in October 2025 |
| Cerebras Systems | United States | Niche Player | Wafer-Scale Engine | North America, Middle East | Launched Cerebras for Nations in November 2025 |
| Hailo | Israel | Niche Player | Hailo-10H | Europe, Asia Pacific | Reached Hailo-10H general availability in July 2025 |
| SambaNova Systems | United States | Niche Player | SN40L RDU | North America | Expanded managed inference offerings in July 2025 |
| Tenstorrent | United States | Niche Player | Tensix and AI compute IP | Asia Pacific, North America | Advanced its Japan training and silicon program with NEDO-backed support |

By Processor Type:

GPUs led the AI Chip and Accelerator Market in 2025 with a 57.0% share, equal to USD 103.97 Billion. They remained dominant because frontier model training, large-scale inference, and model fine-tuning still require flexible matrix compute, strong software tooling, and mature cluster support. NVIDIA’s Blackwell ramp and AMD’s MI350 launch kept GPUs at the center of training budgets. ASICs and custom XPUs held 25.0%, or USD 45.60 Billion, and grew faster than the overall market because hyperscalers are increasingly designing inference-specific and workload-specific silicon to reduce cost per token and improve power use. Broadcom and Marvell sit at the center of this shift through custom accelerator and interconnect programs. Edge AI SoCs and NPUs accounted for 12.0%, or USD 21.89 Billion, led by Qualcomm, Hailo, and PC-oriented AI silicon. FPGA and other reconfigurable accelerators represented 6.0%, or USD 10.94 Billion, serving specialized workloads, prototyping, and lower-volume industrial uses. The processor mix shows a clear split. GPUs still control the training pool, while custom AI silicon and NPUs are capturing the fastest unit growth in inference and edge deployments.
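The processor-type values follow directly from the 2025 shares. The Python sketch below is a minimal illustration, assuming each share is applied to the USD 182.40 Billion total and rounded half up to the nearest USD 0.01 Billion. The report does not state its rounding rule, and `segment_values` and the constants are hypothetical names. Amounts are kept in integer hundredths of a billion so that floating-point drift cannot affect the rounding.

```python
# Sketch: convert the 2025 processor-type shares into USD values and check
# that the parts reconcile with the market total.
# Assumption: round half up to USD 0.01 billion (rule not stated in the report).

TOTAL_2025_CENTS = 18_240  # USD 182.40 billion, in units of USD 0.01 billion

# Shares in tenths of a percent (per mille), as quoted above.
PROCESSOR_SHARES = {
    "GPUs": 570,
    "ASICs and Custom XPUs": 250,
    "Edge AI SoCs and NPUs": 120,
    "FPGAs and Other Accelerators": 60,
}


def segment_values(total_cents: int, shares: dict[str, int]) -> dict[str, float]:
    """Return each segment's value in USD billion, rounded half up to 0.01."""
    if sum(shares.values()) != 1000:
        raise ValueError("shares must add up to 1000 per mille (100%)")
    values = {}
    for name, per_mille in shares.items():
        # Integer half-up rounding: add half of the divisor, then floor-divide.
        cents = (total_cents * per_mille + 500) // 1000
        values[name] = cents / 100
    return values


values = segment_values(TOTAL_2025_CENTS, PROCESSOR_SHARES)
for name, usd in values.items():
    print(f"{name:<30} USD {usd:>7.2f} B")
print(f"{'Sum':<30} USD {sum(values.values()):>7.2f} B")
```

The same helper applies unchanged to the function, deployment, and end-use splits by swapping in their share dictionaries.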

By Function:

Training accelerators accounted for 55.0% of 2025 revenue, equal to USD 100.32 Billion, while inference accelerators represented 45.0%, or USD 82.08 Billion. Training stayed ahead in value because frontier models still require very large clusters, expensive interconnects, and high HBM content. That keeps average selling prices high and supports concentration around a small number of vendors. Inference is growing faster, however, because new AI services need continuous serving capacity across search, copilots, AI agents, recommendation systems, media, drug design, and industrial automation. Qualcomm’s on-prem inference system, Cerebras’ inference cloud positioning, and Broadcom’s custom accelerator growth all point in the same direction. Training remains concentrated in North America and a handful of sovereign AI programs, but inference is distributing more widely into enterprise data centers and edge sites. Through 2034, inference should take a larger share of total units shipped even if training still commands the highest revenue density in top-end clusters. This split matters strategically because vendors that win inference can build recurring volume with lower concentration risk than the training market.

By Deployment Environment:

Data center and cloud environments held 62.0% of 2025 demand, equal to USD 113.09 Billion. This segment includes hyperscale AI factories, sovereign compute clusters, hosted inference, and enterprise data center upgrades. It dominates because the largest workloads still require shared memory pools, fast interconnect fabrics, liquid-cooled racks, and dense server packaging. Edge and on-device environments represented 23.0%, or USD 41.95 Billion. Growth here is being driven by AI PCs, industrial gateways, robotics, cameras, vehicles, and retail endpoints. Enterprise on-premise AI infrastructure accounted for 15.0%, or USD 27.36 Billion, serving financial institutions, governments, healthcare organizations, and manufacturers that want local control over data and latency. The deployment split is shifting because model serving is no longer confined to cloud-scale operators. Qualcomm, Hailo, and Intel are pushing more intelligence to endpoints and local systems, while NVIDIA, AMD, Broadcom, and Marvell remain strongest in dense data center builds. Over time, the center of gravity should stay in the data center, but edge volumes are likely to rise faster on a unit basis as smaller models and on-device agent workloads spread across commercial equipment.

By End Use:

Cloud service providers and frontier model developers led the AI Chip and Accelerator Market with 48.0% of 2025 revenue, or USD 87.55 Billion. This group buys the largest training clusters and the most advanced inference racks, making it the anchor of the market. Enterprise IT and software providers followed at 19.0%, or USD 34.66 Billion, as copilots, internal AI platforms, and private model serving moved into production. Consumer electronics and AI PCs represented 11.0%, or USD 20.06 Billion, supported by Snapdragon X systems and local AI features. Automotive, robotics, and industrial automation held 12.0%, or USD 21.89 Billion, where low-latency edge inference and sensor processing matter most. Public sector, telecom, and research made up the remaining 10.0%, or USD 18.24 Billion, including sovereign AI programs and telecom edge infrastructure. The mix shows that hyperscale demand still sets pricing and volume, but edge and sector-specific deployments are starting to broaden the revenue base. That matters because a market tied only to a few cloud buyers would face sharper cyclicality than a market that also includes enterprise, automotive, and industrial inference.

Regional Analysis

The AI Chip and Accelerator Market generated its highest 2025 revenue in North America, but Asia Pacific remains the main production and supply center for memory, foundry output, packaging, and server assembly.

North America:

North America held 41.0% of the AI Chip and Accelerator Market in 2025, equal to USD 74.78 Billion. The United States dominates regional demand because it combines hyperscalers, chip designers, cloud software vendors, and large AI model developers in one market. NVIDIA, AMD, Broadcom, Marvell, Intel, Qualcomm, Cerebras, and SambaNova all anchor key parts of the regional supply chain. Canada adds value through AI research clusters and sovereign compute programs. Mexico remains smaller in direct chip demand but is relevant in electronics assembly and server-related manufacturing links. North America also benefits from public policy support around semiconductor capacity and export controls, which influence where advanced compute is sold and assembled. The region should remain the revenue leader through 2034 because it hosts the highest concentration of large-scale buyers and the deepest venture and cloud spending base.

Europe:

Europe accounted for 16.0% of 2025 revenue, or USD 29.18 Billion. Germany, France, the United Kingdom, and the Netherlands form the main regional demand center. The United Kingdom is strong in AI software, cloud deployment, and financial-service inference. Germany leads in industrial AI, automotive compute, and manufacturing automation. France combines public compute investment with enterprise AI adoption, while the Netherlands remains central to the semiconductor tool chain and advanced electronics activity. Europe’s main differentiator is policy. The European Chips Act is pushing regional resilience, advanced manufacturing, and chiplet capacity, while AI regulation is making buyers focus more on traceability and governance. Europe will not match North America in raw accelerator demand, but it should remain a high-value market for enterprise AI, automotive AI, and industrial edge inference.

Asia Pacific:

Asia Pacific represented 30.0% of the AI Chip and Accelerator Market in 2025, equal to USD 54.72 Billion. China, Taiwan, Japan, and South Korea are the most strategically important markets. China remains a large buyer of AI compute despite export controls and domestic substitution efforts. Taiwan is critical because foundry capacity, packaging, and board-level manufacturing tie directly to global accelerator supply. Japan is strengthening its AI and semiconductor position through programs that include Tenstorrent’s NEDO-backed engineering effort. South Korea matters through HBM and advanced memory, with Samsung and SK hynix both deepening AI memory capacity. India is rising as a demand market through cloud and enterprise AI adoption rather than fabrication scale. Asia Pacific should post the strongest supply-linked expansion because it sits at the junction of design support, memory, manufacturing, and hardware integration.

Latin America:

Latin America held 5.0% of global revenue in 2025, equal to USD 9.12 Billion. Brazil, Mexico, and Colombia are the most relevant markets. Brazil leads due to cloud demand, financial-service AI, and public-sector digitization. Mexico benefits from electronics manufacturing links and enterprise deployment tied to North American operations. Colombia remains smaller, but interest is rising in AI-enabled customer service, analytics, and public cloud infrastructure. The region is not a major accelerator design center, but it is gaining importance as enterprises adopt inference workloads and as AI PCs, industrial gateways, and retail edge systems spread more widely. Budget discipline remains tighter than in North America, which favors inference and edge use cases with faster payback rather than frontier training clusters. Latin America should therefore expand through applied AI adoption instead of large-scale domestic chip design.

Middle East & Africa:

Middle East & Africa captured 8.0% of 2025 revenue, or USD 14.59 Billion. Saudi Arabia, the UAE, and South Africa are the main demand centers. Saudi Arabia is becoming more important through AI infrastructure and chip-related investment, including Qualcomm’s AI engineering plans with HUMAIN and broader cloud-region buildout. The UAE is active in sovereign AI, data center construction, and model development. South Africa is smaller in absolute value but relevant in enterprise deployment and regional services. The region is attractive because sovereign AI, public cloud expansion, and national compute capacity are all being built in parallel. That creates demand for both training infrastructure and enterprise inference clusters. The market base is still smaller than North America or Asia Pacific, but the spending mix is moving upward in value as governments fund compute independence and long-term AI capacity.
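The regional values above are each rounded independently. North America (USD 74.78 Billion), Europe (29.18), Asia Pacific (54.72), Latin America (9.12), and Middle East & Africa (14.59) add up to USD 182.39 Billion, which is 0.01 below the USD 182.40 Billion total. The Python sketch below shows one common way to close such a gap, largest-remainder (Hamilton) rounding. It is an illustrative method, not the rounding convention used in this report, and `largest_remainder` and the constants are hypothetical names.

```python
# Sketch: reconcile rounded regional values with the 2025 total using
# largest-remainder (Hamilton) rounding. Shares are the 2025 regional shares
# quoted above; amounts are in units of USD 0.01 billion.

TOTAL_2025_CENTS = 18_240  # USD 182.40 billion

REGION_SHARES = {  # per mille (tenths of a percent)
    "North America": 410,
    "Europe": 160,
    "Asia Pacific": 300,
    "Latin America": 50,
    "Middle East & Africa": 80,
}


def largest_remainder(total: int, shares: dict[str, int]) -> dict[str, int]:
    """Split `total` by per-mille `shares` so the integer parts sum to `total`."""
    if sum(shares.values()) != 1000:
        raise ValueError("shares must add up to 1000 per mille (100%)")
    scaled = {name: total * s for name, s in shares.items()}  # exact value x 1000
    parts = {name: x // 1000 for name, x in scaled.items()}   # round down first
    remainders = {name: x % 1000 for name, x in scaled.items()}
    shortfall = total - sum(parts.values())
    # Give leftover units to the largest remainders; Python's stable sort
    # breaks ties in the order the regions are listed.
    for name in sorted(remainders, key=lambda n: -remainders[n])[:shortfall]:
        parts[name] += 1
    return parts


# Independent half-up rounding, as used for the published regional figures.
naive = {name: (TOTAL_2025_CENTS * s + 500) // 1000 for name, s in REGION_SHARES.items()}
fixed = largest_remainder(TOTAL_2025_CENTS, REGION_SHARES)

print("Independent rounding sum:", sum(naive.values()) / 100)
print("Largest-remainder sum:   ", sum(fixed.values()) / 100)
for region in REGION_SHARES:
    print(f"{region:<22} {naive[region] / 100:>6.2f} -> {fixed[region] / 100:>6.2f}")
```

Under this method, North America and Europe tie for the largest remainder. The stable sort then assigns the missing USD 0.01 Billion to North America because it is listed first. A different tie-break rule would change which region absorbs the difference.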


Market Key Segments

By Processor Type

- GPUs

- ASICs and Custom XPUs

- Edge AI SoCs and NPUs

- FPGAs and Other Accelerators

By Function

- Training

- Inference

By Deployment Environment

- Data Center and Cloud

- Edge and On-Device

- Enterprise On-Premise

By End Use

- Cloud Service Providers and Frontier Model Developers

- Enterprise IT and Software

- Consumer Electronics and AI PCs

- Automotive, Robotics and Industrial Automation

- Public Sector, Telecom and Research

Regional Analysis and Coverage

- North America

- Latin America

- East Asia and Pacific

- Southeast Asia and South Asia

- Eastern Europe

- Western Europe

- Middle East & Africa

| Report Attribute | Details |
|---|---|
| Market size (2025) | USD 182.40 B |
| Forecast Revenue (2034) | USD 760.80 B |
| CAGR (2026–2034) | 17.2% |
| Historical data | 2021–2024 |
| Base Year For Estimation | 2025 |
| Forecast Period | 2026–2034 |
| Report coverage | Revenue Forecast, Competitive Landscape, Market Dynamics, Growth Factors, Trends and Recent Developments |
| Segments covered | By Processor Type (GPUs, ASICs and Custom XPUs, Edge AI SoCs and NPUs, FPGAs and Other Accelerators), By Function (Training, Inference), By Deployment Environment (Data Center and Cloud, Edge and On-Device, Enterprise On-Premise), By End Use (Cloud Service Providers and Frontier Model Developers, Enterprise IT and Software, Consumer Electronics and AI PCs, Automotive, Robotics and Industrial Automation, Public Sector, Telecom and Research) |
| Research Methodology | |
| Regional scope | |
| Competitive Landscape | NVIDIA, Broadcom, AMD, Marvell, Intel, Qualcomm, Cerebras Systems, Hailo, SambaNova Systems, Tenstorrent, IBM, Meta, Others |
| Customization Scope | Customization for segments and region/country-level analysis will be provided, and additional customization can be done based on requirements. |
| Pricing and Purchase Options | Customized purchase options are available to meet your exact research needs. Three licenses are offered: Single User License, Multi-User License (up to 5 users), and Corporate Use License (unlimited users and printable PDF). |

Frequently Asked Questions

How big is the AI Chip and Accelerator Market?

The global AI chip and accelerator market was valued at USD 155.63 Billion in 2024 and USD 182.40 Billion in 2025. It is projected to reach USD 760.80 Billion by 2034, growing at a CAGR of 17.2% from 2026 to 2034.

Who are the major players in the AI Chip and Accelerator Market?

NVIDIA, Broadcom, AMD, Marvell, Intel, Qualcomm, Cerebras Systems, Hailo, SambaNova Systems, Tenstorrent, IBM, Meta, and others.

Which segments does the AI Chip and Accelerator Market report cover?

By Processor Type (GPUs, ASICs and Custom XPUs, Edge AI SoCs and NPUs, FPGAs and Other Accelerators), By Function (Training, Inference), By Deployment Environment (Data Center and Cloud, Edge and On-Device, Enterprise On-Premise), By End Use (Cloud Service Providers and Frontier Model Developers, Enterprise IT and Software, Consumer Electronics and AI PCs, Automotive, Robotics and Industrial Automation, Public Sector, Telecom and Research)

How can this market research report help my business make strategic decisions?

Our market research reports provide actionable intelligence, including verified market size data, CAGR projections, competitive benchmarking, and segment-level opportunity analysis. These insights support strategic planning, investment decisions, product development, and market entry strategies for enterprises and startups alike.

How frequently is the data updated?

We continuously monitor industry developments and update our reports to reflect regulatory changes, technological advancements, and macroeconomic shifts. Updated editions ensure you receive the latest market intelligence.

Published Date: 09 Apr 2026